Do you remember the first computer to arrive in your home? I do. I was 17, so this was 1984: an interesting coincidence of year, although this device would not be watching us as we used it, as in George Orwell’s novel 1984, published in 1949.

This was a Commodore 64. It had (gasp!) 64 KILOBYTES of RAM… 16 colors (not 16 million) and a 200 by 320-pixel screen. Wow. It actually used floppy disks, which you could put into a drive slot to play games or use programs. These disks were squares of plastic 5.25 inches on a side, and each of them held about 170 KB of data. The really cool hack was that you could take 2 of them back to back, and using SCISSORS, carefully cut out an extra notch in each, positioned where the other disk’s notch was. This let you flip the disk and use its second side, which DOUBLED its capacity. Pow.

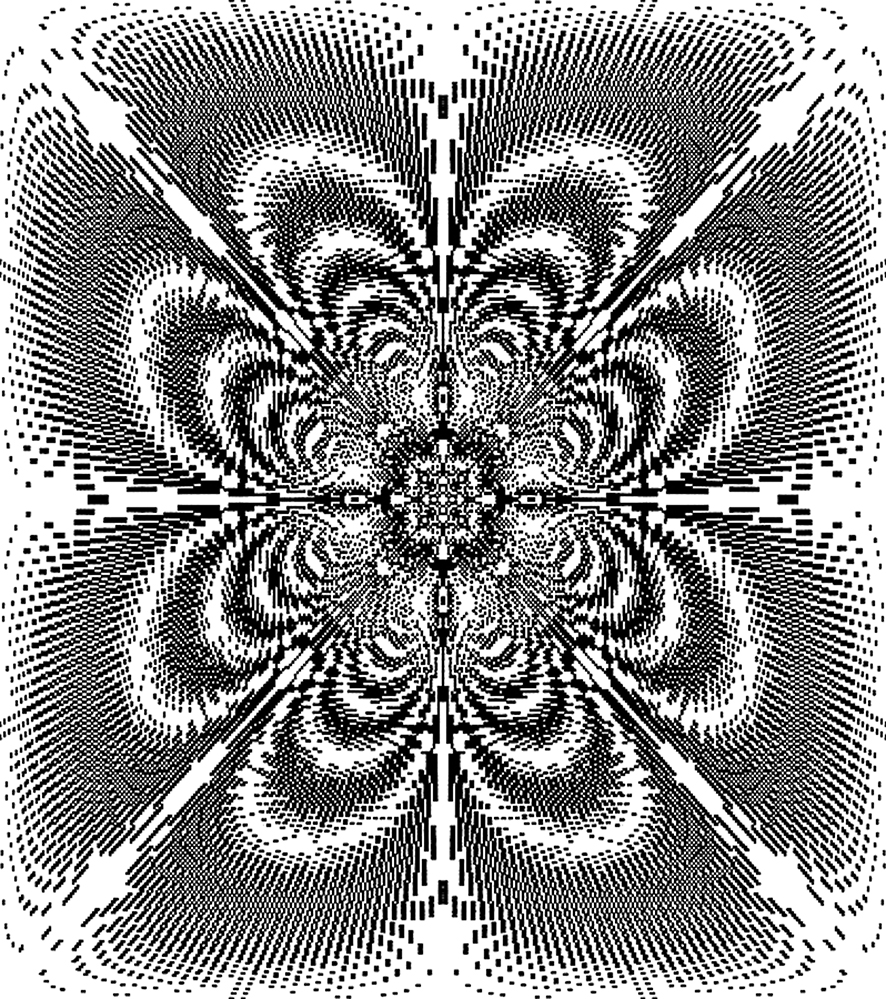

For my 18th birthday, in January 1985, my sister and her husband gave me a graphics program for the C64 called Doodle! Now, you might think, what kind of graphics could you come up with using such a low-spec system? There weren’t yet even any vector graphics, so it was all very clunky, pixelated and primitive. But I took it and ran. This might be the first instance of my taking a program and going far outside the manual with it, into uncharted territory, testing it past its defined limits.

Forget the 16 colors: I stayed with black and white, no grays even. But I soon learned that you could make a line, say from corner to diagonally opposite corner… then move only one endpoint of that line a single pixel (or both, if you wanted), and draw another line. Each line was made of shorter horizontal or vertical segments, which were quite visible given the screen’s coarseness, and you could specify how you wanted the two lines to interact. Would shared black pixels stay black, or go white? Then you’d increment the line, 1 pixel at a time. If you had chosen for overlapping black pixels to cancel each other out to white, a pattern would emerge as the screen’s rectangle filled up with lines. I can’t prove it at this point, but I believe the patterns were my first fractals, although I wasn’t setting out to make these, nor did I even know yet what fractals were. (A new word in mathematics, coined by Benoit Mandelbrot in the 1970s, from the Latin for “broken”. It would take quite a while to percolate into math education.)
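For the curious, the overlap rule described above can be sketched in a few lines of Python. This is only a guess at the idea, not how Doodle! actually worked: it assumes Bresenham's line algorithm for picking the pixels of each line and an XOR toggle for the "black on black goes white" option. The names `blank_screen`, `xor_line` and `sweep` are made up for illustration.

```python
WIDTH, HEIGHT = 320, 200  # two-color screen, 1 = black, 0 = white


def blank_screen():
    """Return an all-white screen as a list of rows."""
    return [[0] * WIDTH for _ in range(HEIGHT)]


def xor_line(screen, x0, y0, x1, y1):
    """Toggle every pixel on a Bresenham line from (x0, y0) to (x1, y1)."""
    dx, dy = abs(x1 - x0), -abs(y1 - y0)
    sx = 1 if x0 < x1 else -1
    sy = 1 if y0 < y1 else -1
    err = dx + dy
    while True:
        screen[y0][x0] ^= 1  # black drawn over black cancels to white
        if x0 == x1 and y0 == y1:
            break
        e2 = 2 * err
        if e2 >= dy:  # time to step horizontally
            err += dy
            x0 += sx
        if e2 <= dx:  # time to step vertically
            err += dx
            y0 += sy


def sweep(screen):
    """Pin one endpoint at the top-left corner and walk the other
    along the bottom edge, one pixel at a time."""
    for x in range(WIDTH - 1, -1, -1):
        xor_line(screen, 0, 0, x, HEIGHT - 1)


if __name__ == "__main__":
    screen = blank_screen()
    sweep(screen)
    black = sum(map(sum, screen))
    print(f"{black} of {WIDTH * HEIGHT} pixels ended up black")
```

Drawing the same line twice restores the screen exactly, which is why densely overlapping lines leave behind interference patterns instead of a solid black wedge.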

I was off, making hundreds of these weird monotone abstract images and saving them to my hacked double-storage floppies. They’re still around, in my parents’ storage of my things in Canada, maybe in a completely obsolete file format which retro/nostalgia specialists can rescue for me, for a fee.

The simple way I made these images fascinated me, and I had never seen anything like them before. Soon I started working for a screen printing company, while still in my last year of high school. I put together a whole exhibition of my work in an actual gallery in my small town: dot-matrix printed, then screen printed in darker black ink on better paper. It was a blast.

I look back on those simple computing days with nostalgia, compared to the arrival of the Internet and the flood of (dis)information which bombards us today at the speed of light. As a potential info-junkie, I have to be careful, as Google will do its best to answer anything I ask. Even in rural, high-mountain Svaneti: we have internet up here too, you know! Turn it down, look away from the screen, go for a walk in nature, read a physical book, put on the skeptic’s glasses, breathe.

I did ask the mathematician father of a school friend how many distinct images a screen of 200 by 320 pixels could produce in two colors. The answer? 2^(200×320), or 2^64000, or roughly 10^19266. Given that the estimated number of atoms in the known universe is “only” about 10^80, this number is inconceivably larger. Wow, pow.
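The arithmetic is easy to check with a few lines of Python. This is a minimal sketch with variable names of my own choosing; it works with logarithms rather than printing the number itself, which runs to more than nineteen thousand digits.

```python
import math

WIDTH, HEIGHT = 320, 200           # screen size in pixels
pixels = WIDTH * HEIGHT            # each pixel is either black or white

# 2**pixels == 10**exponent, so the exponent tells us the size in base 10.
exponent = pixels * math.log10(2)
whole = math.floor(exponent)
decimal_digits = whole + 1                # how many digits the number has
leading = 10 ** (exponent - whole)        # the digits in front, as a float

print(f"pixels: {pixels}")
print(f"2^{pixels} is about {leading:.1f} x 10^{whole}")
print(f"that is a number with {decimal_digits} decimal digits")
```

In other words, the count of possible black-and-white screens is a number 19,266 digits long, which rounds to 10^19266 as a power of ten.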

Blog by Tony Hanmer

Tony Hanmer has lived in Georgia since 1999, in Svaneti since 2007, and been a weekly writer and photographer for GT since early 2011. He runs the “Svaneti Renaissance” Facebook group, now with nearly 2000 members, at www.facebook.com/groups/SvanetiRenaissance/

He and his wife also run their own guest house in Etseri: www.facebook.com/hanmer.house.svaneti